The line between our online identities and real-world presence is becoming thinner than many people realize.

I recently came across a privacy story about a stranger who found someone's private Instagram burner account after only briefly seeing them on a bus. At first, the situation sounds impossible. How could a stranger find a private account with no real name or profile picture?

From a cybersecurity perspective, however, the explanation is far more plausible than many people think.

Modern platforms collect and correlate enormous amounts of data, including:

- shared WiFi networks

- location proximity

- synced contacts

- device fingerprints

- phone numbers

- behavioral patterns

- facial recognition data

Even when users believe they are anonymous online, metadata can still link their identities to one another.

The Power of Metadata

One of the biggest lessons from this story is that privacy is no longer only about what we intentionally share. Today, metadata (information about our activity rather than its content) plays a major role in digital identification.

Metadata can include:

- where your device was located

- which networks you connected to

- who is in your contact list

- what device you use

- how your accounts interact online

Individually, these details may seem harmless. Combined, they create a digital profile that can reveal much more than expected.

This idea is closely tied to:

- OSINT (Open Source Intelligence)

- identity correlation

- behavioral tracking

- social engineering

- digital surveillance
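The way harmless details narrow down to one person can be sketched in a few lines of Python. This is a hypothetical illustration, not how any real platform works. The account names, WiFi names, areas and device labels are all invented, and each signal is reduced to a simple exact match. On its own, each observed signal matches several accounts, but intersecting those matches leaves only one.

```python
# Hypothetical illustration: every account, network name, area and device
# label below is invented. Each signal alone matches several accounts, but
# intersecting the matches can single out one.

accounts = {
    "acct_a": {"wifi": "cafe_net", "area": "downtown", "device": "phone_x"},
    "acct_b": {"wifi": "cafe_net", "area": "downtown", "device": "phone_y"},
    "acct_c": {"wifi": "cafe_net", "area": "suburb", "device": "phone_x"},
    "acct_d": {"wifi": "home_net", "area": "downtown", "device": "phone_x"},
}


def matching(signal: str, value: str) -> set[str]:
    """Return the accounts whose recorded value for `signal` equals `value`."""
    return {name for name, meta in accounts.items() if meta.get(signal) == value}


def narrow(observed: dict[str, str]) -> set[str]:
    """Intersect the candidate sets produced by each observed signal in turn."""
    candidates = set(accounts)
    for signal, value in observed.items():
        candidates &= matching(signal, value)
        print(f"after {signal}={value!r}: {sorted(candidates)}")
    return candidates


if __name__ == "__main__":
    narrow({"wifi": "cafe_net", "area": "downtown", "device": "phone_x"})
```

Running it shows the candidate set shrinking from three accounts to two, and then to one.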

Are “Burner Accounts” Really Anonymous?

Many people assume that creating a second account automatically protects their identity.

In reality, accounts can still become linked if users:

- use the same phone number

- sync contacts

- log in from the same device

- share a recovery email address

- leave location services enabled

This means that even accounts designed to appear anonymous may still leave enough digital breadcrumbs to identify their owners.
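Here is a minimal sketch of how such links could add up. It assumes a simple model in which any two accounts that share an identifier value (phone number, device ID or recovery email) are treated as connected. The data and the function name `linked_groups` are hypothetical, and this is not a description of how Instagram or any other real platform links accounts. The sketch uses a union-find structure, so links are transitive. In the example, the main and burner accounts share nothing directly, but an old account that overlaps with both joins them together.

```python
# Hypothetical illustration: accounts sharing any identifier value end up in
# the same group, even if two of them never share an identifier directly.
# All account names and identifier values are invented.

accounts = {
    "main_account": {"phone": "+15550100", "email": "me@example.com"},
    "burner_account": {"device": "device-42", "email": "alt@example.com"},
    "old_account": {"phone": "+15550100", "device": "device-42"},
    "unrelated": {"phone": "+15550199"},
}


def linked_groups(accts: dict[str, dict[str, str]]) -> list[set[str]]:
    """Group accounts that are transitively connected by shared identifiers."""
    parent = {name: name for name in accts}

    def find(x: str) -> str:
        # Follow parent pointers to the group's root, halving the path as we go.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    def union(a: str, b: str) -> None:
        parent[find(a)] = find(b)

    # Remember the first account seen with each (identifier type, value) pair
    # and merge every later account carrying the same pair into its group.
    first_seen: dict[tuple[str, str], str] = {}
    for name, ids in accts.items():
        for key, value in ids.items():
            token = (key, value)
            if token in first_seen:
                union(name, first_seen[token])
            else:
                first_seen[token] = name

    groups: dict[str, set[str]] = {}
    for name in accts:
        groups.setdefault(find(name), set()).add(name)
    return list(groups.values())


if __name__ == "__main__":
    for group in linked_groups(accounts):
        print(sorted(group))
```

Running it prints `['burner_account', 'main_account', 'old_account']` as one group and `['unrelated']` on its own.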

Facial Recognition Changes Everything

The article also discussed how facial recognition tools can increase privacy risks even further.

Modern reverse image search engines and AI-powered facial recognition systems can analyze publicly available images and connect them to:

- social media profiles

- websites

- online posts

- public databases

As these technologies continue to evolve, the boundary between online and offline identity becomes increasingly blurred.

Cybersecurity Beyond Hacking

Stories like this are important because they show that cybersecurity is not only about hacking into systems or analyzing malware.

Cybersecurity also involves understanding:

- digital footprints

- privacy exposure

- metadata

- surveillance risks

- human behavior online

The convenience offered by modern platforms often comes at the cost of personal privacy.

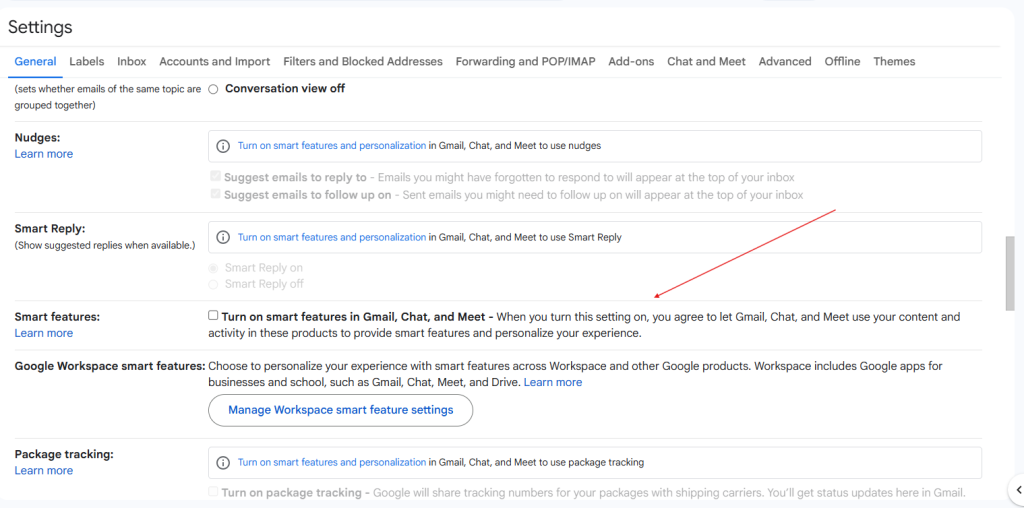

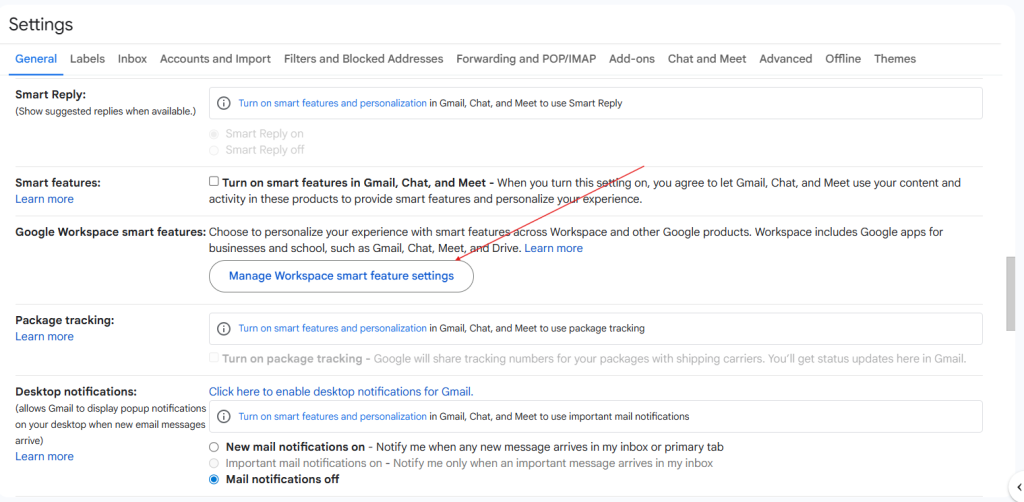

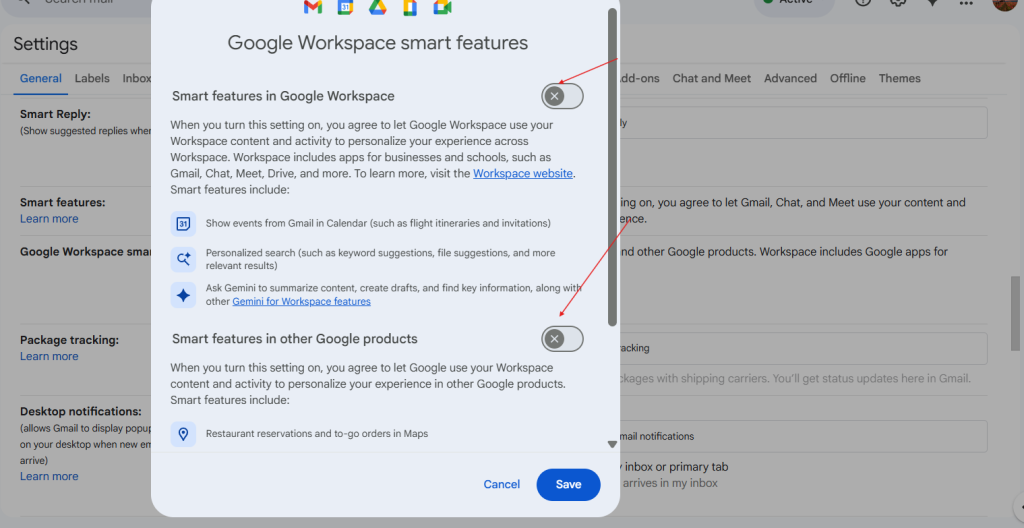

How Users Can Better Protect Their Privacy

While complete anonymity online is difficult to achieve, users can reduce their exposure by:

- disabling unnecessary location sharing

- avoiding public WiFi for sensitive activity

- turning off contact syncing

- using separate phone numbers for different accounts

- reviewing app permissions regularly

- being cautious about images shared publicly

Final Thoughts

Technology is becoming increasingly capable of connecting digital identities with real-world behavior.

What once sounded like science fiction is quickly becoming part of everyday life.

As someone interested in cybersecurity, I find this topic both fascinating and concerning because it demonstrates how small digital traces can reveal much more than people expect.

Understanding digital privacy is no longer optional — it is becoming an essential cybersecurity skill.

Source & Inspiration:

Article: “Stranger danger? Here’s how a random person on the bus can still find you on Instagram” by Cybernews.